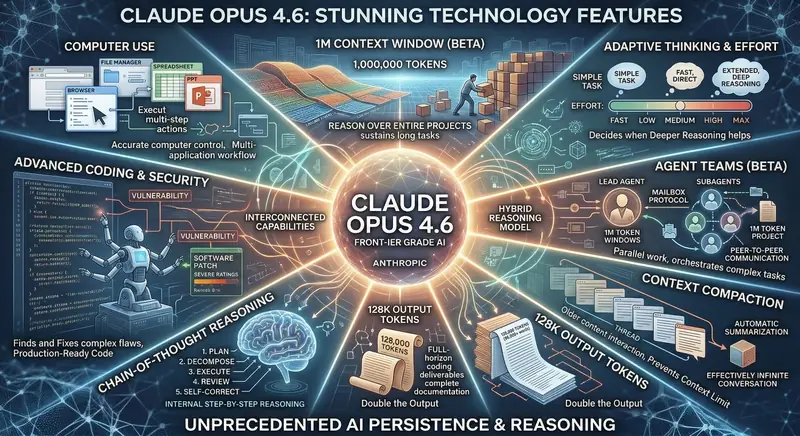

Anthropic just dropped something massive. Claude Opus 4.6 is here, and honestly, it feels like a completely different beast compared to what we had even six months ago. This isn't some minor version bump where they tweaked a few numbers and called it a day. We're talking about a frontier-grade AI model that can reason over million-token projects, control your computer, work in teams of agents, and write production-ready code that actually runs without breaking.

I've spent the past few weeks going deep into what this model can actually do — not just the marketing points, but the real-world stuff that matters when you sit down and try to use it. So let's walk through every major feature and figure out what Claude Opus 4.6 means for students, developers, researchers, and anyone who uses AI regularly.

The 1 Million Token Context Window — And Why It Changes Everything

Let's start with the headline number: 1,000,000 tokens. That's the context window in Claude Opus 4.6, currently available in beta. To put that in perspective, that's roughly 700,000 to 750,000 words. You could paste an entire textbook — cover to cover — and Claude would hold every single page in memory while talking to you about it.
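
If you want to sanity-check that word count yourself, here's a rough sketch in Python. It assumes the common rule of thumb of about 0.7 to 0.75 English words per token, which is an approximation and not an official Anthropic figure. The helper names (`tokens_to_words`, `estimate_tokens`, `fits_in_context`) are mine, made up for illustration. A real tokenizer will give different counts, especially for code or non-English text.

```python
# Rule-of-thumb conversion (an assumption, not an official figure):
# one token is roughly 0.7 to 0.75 English words.
CONTEXT_WINDOW_TOKENS = 1_000_000


def tokens_to_words(tokens: int, words_per_token: float = 0.75) -> int:
    """Convert a token budget into an approximate word count."""
    return round(tokens * words_per_token)


def estimate_tokens(text: str, words_per_token: float = 0.75) -> int:
    """Estimate tokens from a whitespace word count."""
    return round(len(text.split()) / words_per_token)


def fits_in_context(texts: list[str], reserve_for_output: int = 0) -> bool:
    """True if the combined estimate, plus any reserved output, fits the window."""
    total = sum(estimate_tokens(t) for t in texts)
    return total + reserve_for_output <= CONTEXT_WINDOW_TOKENS


print(tokens_to_words(CONTEXT_WINDOW_TOKENS, 0.70))  # 700000
print(tokens_to_words(CONTEXT_WINDOW_TOKENS, 0.75))  # 750000
```

By this estimate a 600,000-word textbook comes out around 800,000 tokens and fits, while a 900,000-word corpus (about 1.2 million tokens) does not.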

But the real magic isn't just the size. It's what Anthropic calls "reasoning over entire projects." Previous models with large context windows would technically accept the text, but their attention drifted. They'd forget details from the beginning by the time they reached the end. Claude Opus 4.6 maintains coherent understanding across the full window. It can sustain long tasks, keep track of dependencies, and cross-reference information from page 1 with details on page 500.

Think about what this means for real work. You're a law student reviewing a 200-page case file? Paste the whole thing. You're a developer debugging a codebase with 50 interconnected files? Feed them all at once. You're writing a thesis and need the AI to understand your entire research corpus? This is where that happens.

128K Output Tokens — Double the Output

Here's something most people overlook when they focus on the input window. Claude Opus 4.6 can generate up to 128,000 output tokens — that's roughly 96,000 words in a single response. For context, the previous limit was 64K on many models, and plenty of competitors still cap out at 8K or 16K.

What does this unlock? Full-horizon coding deliverables for starters. Need an entire web application scaffold with routes, controllers, models, views, tests, and documentation? Claude can deliver that in one go — not piecemeal across five or six follow-up messages where you keep saying "continue." It generates complete documentation, end-to-end project implementations, and detailed research papers without hitting artificial length barriers.
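
To make the "continue" problem concrete, here's a small back-of-the-envelope sketch. The 100,000-token deliverable is a made-up size picked only for illustration, and `responses_needed` is a hypothetical helper, not anything from Anthropic's tooling. It simply divides the deliverable by the output cap and rounds up.

```python
import math


def responses_needed(deliverable_tokens: int, output_cap: int) -> int:
    """How many separate responses a deliverable needs under a given output cap."""
    return math.ceil(deliverable_tokens / output_cap)


# Hypothetical deliverable size, chosen only for illustration.
deliverable = 100_000

for cap in (8_000, 16_000, 64_000, 128_000):
    print(f"{cap:>7} token cap -> {responses_needed(deliverable, cap)} response(s)")
```

Under these assumptions, an 8K cap needs 13 responses, 16K needs 7, 64K needs 2, and 128K needs just 1.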

For developers especially, this is a game-changer. You can ask Claude to refactor an entire module, and it won't stop halfway through. It writes the whole thing, comments included, and you get production-ready output rather than a skeleton you need to flesh out yourself.

Chain-of-Thought Reasoning — The Brain Behind the Output

Odds are you've heard the term "chain-of-thought" thrown around in AI circles. With Claude Opus 4.6, Anthropic has built this into the core architecture in a way that genuinely shows. The model doesn't just spit out an answer — it follows an internal step-by-step reasoning process: Plan, Decompose, Execute, Review, and Self-Correct.

What does that feel like in practice? When you give Claude a complex math problem, a logical puzzle, or a multi-step coding challenge, it breaks the problem down before jumping to conclusions. It plans its approach, works through each stage, then reviews its own work and catches mistakes before presenting the final answer.

I tested this with a few challenging scenarios — competitive programming problems, multi-step word problems, contradictory data analysis — and the difference is noticeable. Claude Opus 4.6 catches its own logical errors more often than previous versions. It doesn't hallucinate as freely. When it's uncertain, it tends to acknowledge that rather than confidently making something up. That internal reasoning chain acts like a built-in proofreader.

Adaptive Thinking and Effort — Smart Resource Management

This is one of the more subtle features, but it might be the one that matters most in daily use. Claude Opus 4.6 introduces Adaptive Thinking and Effort, which basically means the model decides how much brainpower to throw at a given question.

Ask it "what's the capital of France?" and it responds instantly: fast, direct, no unnecessary preamble. Ask it to analyze a complex legal document with conflicting clauses and propose a settlement strategy, and it shifts into an extended deep-reasoning mode, spending more compute to give you a thorough, well-considered answer.

The model internally adjusts on a spectrum from Fast through Low, Medium, High, all the way to Max effort. Simple tasks get the fast treatment. Complex tasks get the deep reasoning treatment. You're not paying for — or waiting on — heavy-duty thinking when you just need a quick fact check. That's genuinely smart resource management, and it makes the overall experience feel more responsive.
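
How the model actually makes this decision isn't public, so treat the following as a toy illustration of the idea and nothing more. It maps a prompt onto the five levels named above using a crude keyword-and-length heuristic. The signal words, the 50-word threshold, and the `pick_effort` function are all invented for this sketch.

```python
import re
from enum import IntEnum


class Effort(IntEnum):
    FAST = 0
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    MAX = 4


# Hypothetical signal words, invented for this sketch.
HARD_SIGNALS = {"analyze", "prove", "debug", "refactor", "strategy", "conflicting"}


def pick_effort(prompt: str) -> Effort:
    """Toy heuristic: 'hard' keywords and long prompts raise the effort level."""
    words = re.findall(r"[a-z]+", prompt.lower())
    score = len(HARD_SIGNALS.intersection(words))
    if len(words) > 50:
        score += 1
    # Clamp to the highest level so the score always maps to a valid Effort.
    return Effort(min(score, Effort.MAX))


print(pick_effort("What's the capital of France?").name)
print(pick_effort(
    "Analyze this contract with conflicting clauses and propose a settlement strategy"
).name)
```

The first prompt lands on `FAST` and the second on `HIGH`. A real system would use far richer signals than word matching, but the shape is the same: cheap questions get a cheap path.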

Computer Use — Your AI Can Actually Use Your Computer

Alright, this one genuinely blew my mind the first time I saw it in action. Claude Opus 4.6 has a capability called Computer Use, and it does exactly what it sounds like. The model can see your screen, move your mouse, click buttons, type into text fields, open applications, navigate file managers, and execute multi-step actions across different software.

Imagine telling Claude: "Open the spreadsheet in my Documents folder, copy the Q4 revenue numbers, paste them into a new PowerPoint slide, format them as a bar chart, and save the file." And then watching it actually do that. It's not generating instructions for you to follow — it's performing the actions itself, controlling your computer in real time.

The practical applications are huge. Data entry automation, testing software interfaces, filling out repetitive forms, migrating data between applications that don't have APIs — all of this becomes possible through natural language instructions. For businesses drowning in manual processes, this feature alone could justify the investment.

Anthropic has been clear that this is still evolving, with safety guardrails in place to prevent misuse. But the foundation is solid, and it represents a fundamental shift from AI as "a thing you chat with" to AI as "a thing that does work alongside you."

Advanced Coding and Security — Fix It Before It Ships

If you're a developer, this section is for you. Claude Opus 4.6 doesn't just write code — it finds and fixes complex security vulnerabilities, generates production-ready patches, and rates the severity of flaws it detects. We're talking about an AI that can identify buffer overflows, SQL injection risks, authentication bypasses, and race conditions, then produce the code to fix them.

During my testing, I fed Claude a moderately complex Node.js application with several intentional security holes. It identified every one, explained why each was dangerous using real-world exploit scenarios, and generated patches with inline comments explaining the fix. It rated each vulnerability by severity and even suggested additional hardening measures I hadn't asked about.

For security audits, code reviews, and DevSecOps workflows, this is transformative. Junior developers can use Claude as a learning tool to understand why certain patterns are dangerous. Senior developers can use it to speed up audit processes that typically take days.

"Claude Opus 4.6 represents the most capable AI system we've ever built. It's not just about being smarter — it's about being genuinely useful for long, complex, real-world work." — Anthropic

Agent Teams (Beta) — Multiple AIs Working Together

Here's where things get really interesting. Claude Opus 4.6 introduces Agent Teams in beta — a system where multiple Claude instances work together on complex tasks, coordinated by a lead agent.

The architecture uses what Anthropic calls a Mailbox Protocol for peer-to-peer communication between subagents. A lead agent orchestrates the overall task, and specialized subagents each handle a different part. Each agent gets its own 1M-token context window, and they communicate through structured message passing, so they can work in parallel on different parts of a project and then coordinate their outputs.
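
I don't have the internals of the Mailbox Protocol, so here's a minimal sketch of the general pattern it describes: each agent has an inbox, and messages are routed by recipient name. Every class and function here (`Message`, `Agent`, `Mailroom`, `run_team`) is hypothetical, and the "work" each subagent does is just a placeholder string. It also runs sequentially to stay simple, where a real system would run subagents in parallel.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Message:
    sender: str
    recipient: str
    body: str


@dataclass
class Agent:
    name: str
    inbox: deque = field(default_factory=deque)

    def handle(self, msg: Message) -> Message:
        # Stand-in for real model work: reply to the sender with a status line.
        return Message(self.name, msg.sender, f"{self.name} finished: {msg.body}")


class Mailroom:
    """Routes each message to the inbox of the agent it is addressed to."""

    def __init__(self, agents: list[Agent]) -> None:
        self.agents = {a.name: a for a in agents}

    def send(self, msg: Message) -> None:
        self.agents[msg.recipient].inbox.append(msg)


def run_team(tasks: dict[str, str]) -> list[str]:
    """Lead assigns one task per subagent, then collects the replies."""
    lead = Agent("lead")
    subs = [Agent(name) for name in tasks]
    room = Mailroom([lead, *subs])

    for name, task in tasks.items():
        room.send(Message("lead", name, task))

    for sub in subs:
        while sub.inbox:
            room.send(sub.handle(sub.inbox.popleft()))

    return [m.body for m in lead.inbox]


print(run_team({"backend": "design API", "frontend": "build UI"}))
```

Because routing goes through the mailroom by name, any agent could message any other, which is the peer-to-peer part. This sketch only exercises the lead-to-subagent path.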

Think about this practically. You're building a full-stack application. One agent handles the backend API design. Another works on the frontend components. A third writes database schemas and migration files. A fourth generates test suites. The lead agent keeps them all aligned, resolves conflicts, and assembles the final deliverable. That's not science fiction anymore — it's a beta feature you can actually use.

For enterprise users managing massive codebases or research teams working on large-scale analysis projects, agent teams could compress weeks of work into hours. It's early days, but the potential here is enormous.

Context Compaction — Effectively Infinite Conversations

Even with a million tokens, long conversations eventually hit limits. Or they used to. Claude Opus 4.6 introduces Context Compaction, a system that automatically summarizes older parts of your conversation to free up space for new content. It preserves the essential information, key decisions, and important context while compressing the verbose back-and-forth into efficient summaries.
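
The general idea can be sketched in a few lines. This is an illustration of the technique under heavy assumptions, not Anthropic's implementation: tokens are counted as whitespace-separated words, and the "summarizer" just keeps the first few words of each old message, where a real system would ask a model to write the summary. The names `count_tokens`, `summarize`, and `compact` are hypothetical.

```python
def count_tokens(text: str) -> int:
    # Crude stand-in: one token per whitespace-separated word.
    return len(text.split())


def summarize(messages: list[str], words_each: int = 5) -> str:
    # Placeholder summarizer: keep the first few words of each message.
    heads = [" ".join(m.split()[:words_each]) for m in messages]
    return "[summary] " + " | ".join(heads)


def compact(history: list[str], budget: int, keep_recent: int = 2) -> list[str]:
    """Fold older messages into one summary once the history exceeds the budget."""
    total = sum(count_tokens(m) for m in history)
    if total <= budget or len(history) <= keep_recent:
        return history
    cut = len(history) - keep_recent
    old, recent = history[:cut], history[cut:]
    return [summarize(old), *recent]


history = [
    "one two three four five six seven",
    "alpha beta gamma delta epsilon zeta",
    "recent one",
    "recent two",
]
print(compact(history, budget=10))
```

With a budget of 10, the two oldest messages collapse into a single summary line while the two most recent stay verbatim. With a generous budget, the history comes back untouched.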

The result? Effectively infinite conversations. You can work with Claude on a project across days or even weeks, and it won't suddenly "forget" what you discussed on day one. The automatic summarization happens transparently — you don't need to manually paste previous context or re-explain your project setup every time. It just works.

This is particularly valuable for ongoing projects where context continuity matters. Research assistants, long-running development projects, creative writing across multiple sessions — all of these benefit from conversations that don't hit a wall.

Hybrid Reasoning Model — The Best of Both Worlds

Claude Opus 4.6 doesn't force you to choose between fast answers and deep thinking. Its Hybrid Reasoning Model combines the interconnected capabilities we've discussed — chain-of-thought reasoning, adaptive effort, massive context, and computer use — into a unified system that dynamically selects the right approach for each task.

When you're brainstorming ideas, it's conversational and quick. When you hand it a complex debugging problem, it shifts into systematic analysis mode. When you ask it to build something, it engages the full planning pipeline. The model adapts fluidly, and the transitions feel natural rather than jarring.

This hybrid approach is what makes Opus 4.6 feel like a frontier-grade model rather than a specialized tool. It's not a coding AI or a writing AI or a research AI — it's all of those things, and it picks the right mode automatically.

What Can You Actually Do With Claude Opus 4.6?

Let's get practical. Here are real-world use cases where Claude Opus 4.6 genuinely excels:

For Students

- Thesis Research: Load your entire literature review into the context window and ask Claude to identify gaps, contradictions, and opportunities for original contribution

- Exam Preparation: Feed it an entire semester's worth of notes and let it create comprehensive study guides with practice questions

- Code Projects: Get complete, well-documented project implementations with proper error handling and testing

For Developers

- Full-Stack Development: Generate complete applications with frontend, backend, database, and deployment configurations

- Security Audits: Scan codebases for vulnerabilities and get production-ready patches

- Code Migration: Convert projects between frameworks or languages while maintaining functionality

- Documentation: Generate comprehensive API docs, README files, and architecture diagrams from code

For Researchers

- Literature Analysis: Process hundreds of papers simultaneously to find patterns and insights

- Data Interpretation: Analyze complex datasets with nuanced statistical reasoning

- Grant Writing: Draft detailed research proposals with proper methodology sections

For Business Professionals

- Report Generation: Create detailed business reports from raw data

- Process Automation: Use computer use to automate repetitive desktop workflows

- Strategic Analysis: Feed market reports and competitive data for comprehensive SWOT analysis

How Does Claude Opus 4.6 Compare to the Competition?

Let's be honest — GPT-4o, Gemini 2.5 Pro, and Claude Opus 4.6 are all impressive models. But Opus 4.6 pulls ahead in a few specific areas:

- Context Window: 1M tokens (Claude Opus 4.6) vs 128K (GPT-4o) vs 1M (Gemini 2.5 Pro), though Claude's sustained reasoning across the full window is more consistent

- Output Length: 128K tokens (Claude Opus 4.6) vs 16K (GPT-4o) vs 65K (Gemini 2.5 Pro), a massive advantage for long-form output

- Computer Use: Neither GPT-4o nor Gemini offers comparable native computer control capabilities

- Agent Teams: A unique feature with no direct equivalent in competing models yet

- Coding Quality: Claude has consistently outperformed competitors on coding benchmarks, and 4.6 extends that lead with security analysis

Where competitors still have edges: Google Gemini benefits from deep integration with Google Workspace and Search. ChatGPT has a larger ecosystem of plugins and a massive user base. But for raw capability — especially in coding, reasoning, and long-form work — Claude Opus 4.6 is currently the one to beat.

Pricing and Access

Claude Opus 4.6 is available through Anthropic's API and the Claude.ai web interface. Here's the general breakdown:

- Claude Free: Limited access with lower usage caps

- Claude Pro ($20/month): Full Opus 4.6 access with generous limits

- Claude Team ($25/user/month): Team features plus full model access

- Claude Enterprise: Custom pricing with SSO, audit logs, and admin controls

- API Access: Pay-per-token pricing for developers building applications

Beta features like Agent Teams and the full 1M context window may have additional access requirements during the initial rollout period.

Final Thoughts — Is Claude Opus 4.6 Worth It?

After spending serious time with this model, here's my honest take: Claude Opus 4.6 is the most capable AI model available right now for sustained, complex work. The combination of a million-token context window, 128K output tokens, chain-of-thought reasoning, computer use, and agent teams creates something that feels qualitatively different from previous models.

It's not perfect; no AI model is. It still makes occasional mistakes, it can still be confidently wrong about obscure topics, and the computer use feature is still in beta with rough edges. But for the kind of deep, multi-step work that professionals and students actually need to do, it's in a league of its own.

If you're a developer, researcher, student, or business professional who relies on AI as a working tool rather than a novelty, Claude Opus 4.6 deserves your attention. It's not just incrementally better — it's a fundamentally different experience.

Stay tuned to GetUpdated for more coverage on AI developments and how they impact your work and life!