If you are a student developer and opened Copilot this week thinking, "Wait, where did Claude and other premium model choices go?", you were not imagining it. A lot changed on March 12, 2026. But one thing did not: GitHub says free Copilot access for verified students is still here.

The confusion came from how the update was communicated versus how it felt in the product. Officially, this is a plan and packaging transition to keep Copilot sustainable for a global student user base. Practically, students noticed model menu changes first. And when model choices change overnight, it feels personal, especially if your workflow depended on a specific model for coding, debugging, or writing docs.

This guide breaks down exactly what happened, why GitHub is doing this, what "removed for self-selection" really means, and how students can adapt without losing momentum in semester projects, internships, and hackathon builds.

Quick Summary: What Changed on March 12, 2026

Here is the short, accurate version before we go deep:

- Verified students were moved under a new GitHub Copilot Student plan.

- Academic verification status stays the same, and users did not need to re-apply.

- GitHub stated that premium request unit entitlements remain unchanged at launch.

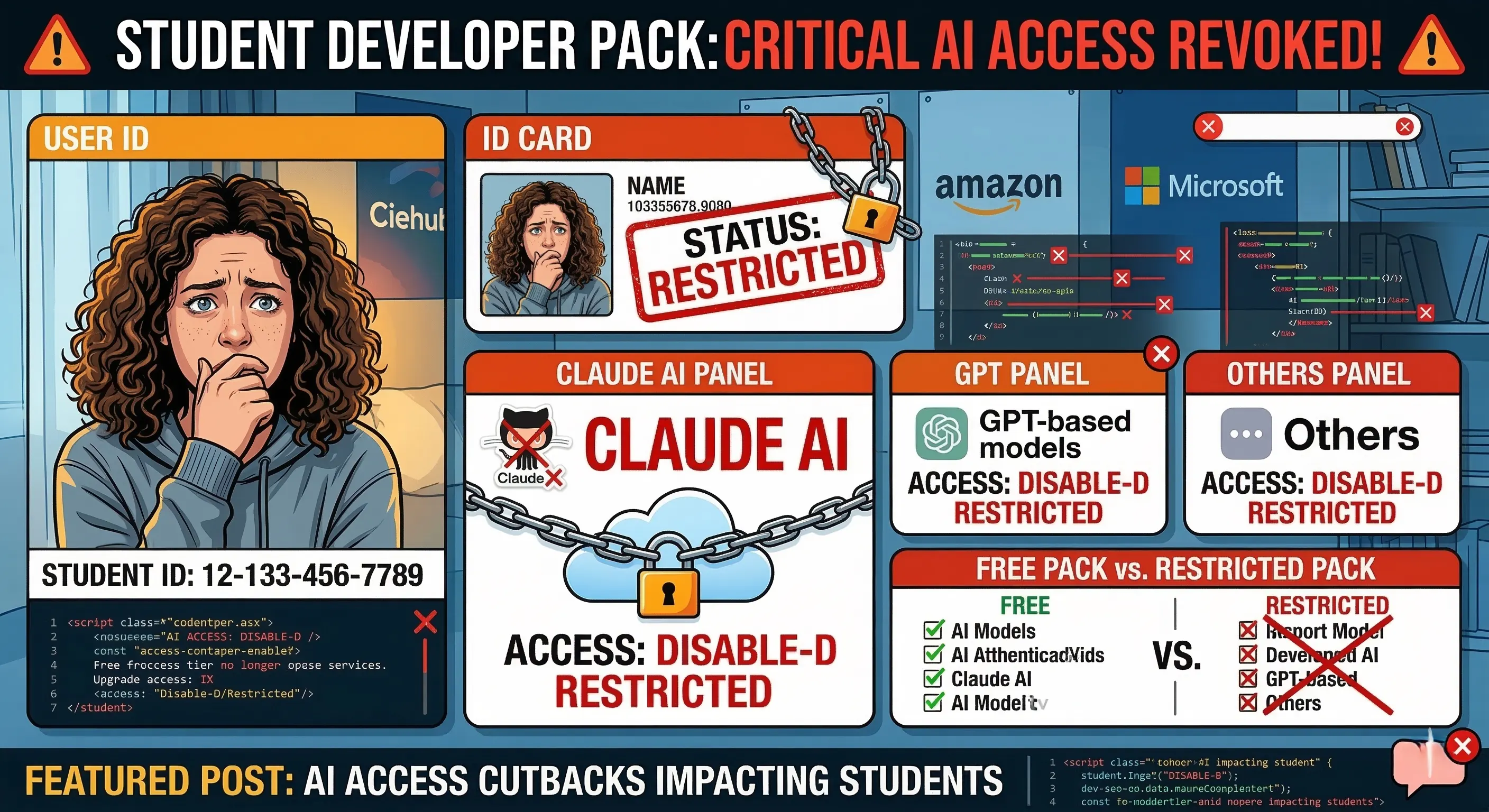

- Some premium model options are no longer available for manual self-selection under the student plan, including GPT-5.4 and Claude Opus/Sonnet.

- Auto mode remains available and can still route tasks through providers such as OpenAI, Anthropic, and Google.

- GitHub indicated more testing-driven adjustments could follow, including model availability and usage limits.

So no, Copilot was not removed from the Student Developer Pack experience. But yes, direct access patterns changed in ways that students can feel immediately.

Why GitHub Made This Move

GitHub's message to students is straightforward: the student community is large, global, and growing fast. Nearly two million students are using Copilot, according to the update. As Copilot adds new capabilities and expensive model options, the company says it must rebalance access to keep the program free at scale.

This is a classic platform tradeoff. If a product promises free access to millions of users, it must control cost variability somewhere. In AI products, cost variability often comes from two things: high-end model usage and unpredictable peak demand. Restricting direct self-selection of costly models while preserving "Auto" routing is one way to flatten those spikes without fully removing access to the more capable models.

From a business operations perspective, the logic is understandable. From a student's workflow perspective, the pain is also understandable. Both things can be true at the same time.

What "No Longer Available for Self-Selection" Actually Means

This line matters because many people read it as "model removed forever." That is not exactly what was said. The update specifies that premium models are no longer available for direct manual selection under the student plan. In parallel, GitHub says Auto mode still uses a strong set of models from multiple providers, including Anthropic.

In simple terms:

- Before: you might pick a specific premium model from the menu for each task.

- Now: direct premium pick options are reduced for students, but Auto can still route tasks to supported providers based on system logic.

This distinction is important for students who do competitive programming, large codebase refactoring, or deeply structured writing. Your control over which model runs has changed, but the best output quality you can reach has not necessarily dropped in every scenario.

What Students Are Actually Feeling Right Now

The student reaction is not only about "features lost." It is about trust and predictability. Most student developers run on tight timelines: assignment deadlines, limited lab slots, interview prep windows, and hackathon weekends where every hour matters. If tooling changes suddenly, even small interface shifts can trigger real productivity drops.

The most common concerns we are seeing are:

- "Will my coding quality drop if I cannot manually force a model I trust?"

- "If more limits are coming, should I migrate to another workflow now?"

- "Is this temporary testing or the new normal for student access?"

- "How do I prepare for internships where teams use different model policies?"

These are valid questions. The update itself says more testing may happen in coming weeks, which means students should optimize for resilience, not one static setup.

How to Adapt Without Losing Momentum

If your old workflow depended on manually pinning one model, here is a practical adaptation playbook that works well for coursework and project delivery:

1. Design prompts for portability, not one model personality

Instead of relying on "model X style," write prompts that include clear constraints: input context, expected format, edge cases, test expectations, and output boundaries. Better prompt structure reduces dependence on model-specific quirks.
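As a minimal sketch of what a portable prompt can look like, the snippet below collects the constraints listed above into one structure and renders them as plain text. `PromptSpec` and its field names are hypothetical, invented for this illustration, and are not part of Copilot or any library. The point is only that nothing in the rendered prompt depends on which model receives it.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the constraints a portable prompt states."""

    task: str
    context: str
    expected_format: str
    edge_cases: list[str] = field(default_factory=list)
    test_expectations: list[str] = field(default_factory=list)
    output_boundaries: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Render the spec as plain text with no model-specific wording."""

        def bullets(items: list[str]) -> str:
            # An empty list is shown explicitly so the gap is visible.
            return "\n".join(f"- {item}" for item in items) or "- (none stated)"

        sections = [
            ("Task", self.task),
            ("Context", self.context),
            ("Expected format", self.expected_format),
            ("Edge cases", bullets(self.edge_cases)),
            ("Tests the result must pass", bullets(self.test_expectations)),
            ("Output boundaries", bullets(self.output_boundaries)),
        ]
        return "\n\n".join(f"{title}:\n{body}" for title, body in sections)


# Example values are placeholders for illustration only.
spec = PromptSpec(
    task="Write a function that parses ISO 8601 dates from log lines.",
    context="Python 3.11, standard library only, called once per log line.",
    expected_format="One function with type hints and a docstring, no prose.",
    edge_cases=["line has no date", "line has two dates"],
    test_expectations=["returns None when no date is present"],
    output_boundaries=["do not add third-party imports"],
)
print(spec.render())
```

The same rendered text can be pasted into Copilot Chat or into a backup assistant, which makes side-by-side comparison easier when routing changes.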

2. Use Auto mode strategically

Auto mode is not just a fallback. It can be strong for mixed workflows where tasks vary by complexity. For example, use Auto for general coding flow, then tighten your instructions when handling architecture decisions, bug triage, or production-grade refactoring.

3. Add a verification loop

Whatever the model path, always run a quick human verification routine:

- compile or run tests,

- check edge cases,

- scan for security mistakes,

- confirm style and naming consistency.

This keeps output quality stable even if model routing changes behind the scenes.
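The automatable part of that routine can be scripted. The sketch below is one possible shape, assuming your checks are command-line tools that exit with status 0 on success; `run_checks` is a hypothetical helper written for this article. Edge-case review and a security read-through still need a human.

```python
import subprocess
import sys


def run_checks(checks: list[tuple[str, list[str]]]) -> dict[str, bool]:
    """Run each command and record whether it exited with status 0."""
    results: dict[str, bool] = {}
    for name, command in checks:
        try:
            completed = subprocess.run(command, capture_output=True, text=True)
            results[name] = completed.returncode == 0
        except OSError:
            # The command could not be started (for example, tool not installed).
            results[name] = False
    return results


# Demo with two stand-in commands so the sketch runs anywhere.
# In a real project you would list your own tools here instead,
# for example a test runner and a linter (an assumption, not a requirement).
demo = run_checks([
    ("passes", [sys.executable, "-c", "raise SystemExit(0)"]),
    ("fails", [sys.executable, "-c", "raise SystemExit(1)"]),
])
for name, passed in demo.items():
    print(f"{name}: {'ok' if passed else 'FAILED'}")
```

Running the same script after every accepted suggestion gives you a consistent baseline regardless of which model produced the code.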

4. Keep one backup assistant stack

For critical deadlines, keep a secondary AI setup ready for emergency comparison. The point is not to switch everything. The point is risk control when policies or menus shift during high-stakes weeks.

For Student Hackathons: A Realistic Workflow Under the New Plan

Hackathons are where tool policy changes hurt the most. Here is a workflow that holds up even when direct model selection is limited:

- Define a strict architecture outline before writing code.

- Use Auto mode for boilerplate generation and integration tasks.

- Assign one teammate to validation: tests, lint, and dependency sanity checks.

- Use short, explicit prompts with acceptance criteria in every request.

- Document decisions as you go so model output variance does not derail team context.

When teams treat AI as an accelerator inside a disciplined process, the impact of model menu changes drops sharply.

What This Means for Career Readiness

There is a hidden upside to this moment. Students who learn to work across changing AI access policies become more employable. In industry, model governance changes often. Enterprises rotate providers, restrict model tiers, and enforce policy gates. Engineers who can still ship clean code under shifting constraints stand out quickly.

If you are a student preparing for internships or entry-level roles, practice these now:

- write model-agnostic prompts,

- verify every generated output,

- document assumptions,

- separate ideation from implementation,

- treat AI as a collaborator, not an oracle.

This is exactly the mindset hiring teams are starting to value in 2026.

Will GitHub Bring Premium Self-Selection Back for Students?

No public timeline promises that. The official communication emphasizes ongoing testing and feedback loops before broader changes. That means the most practical posture is: assume iterative changes will continue and build workflows that tolerate those changes.

If GitHub sees strong evidence from students that a specific model access pattern is essential for learning outcomes, capstone projects, or accessibility needs, future adjustments are possible. But that is far more likely when feedback is structured and specific.

How to Give Feedback That Actually Influences Product Decisions

Saying "this update is bad" is understandable, but low impact. Product teams act faster on precise reports. If you want your voice to count, provide:

- your use case: assignment type, stack, team size, and deadline pressure,

- what changed in your workflow after March 12,

- what metric got worse: time-to-first-draft, bug count, iteration speed, confidence,

- a concrete request: restore a specific option, increase the budget in scenario X, or add an education-specific toggle.

Actionable feedback beats emotional feedback when platform policy is in flux.
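To make the four items above concrete, here is a small sketch that turns them into a short report with a computed percentage change. `WorkflowFeedback` is a hypothetical structure, and the numbers in the example are placeholders, not measurements from any real student.

```python
from dataclasses import dataclass


@dataclass
class WorkflowFeedback:
    """Hypothetical record of one structured feedback report."""

    use_case: str
    change_observed: str
    metric_name: str
    before: float  # metric value before the change
    after: float   # metric value after the change
    request: str

    def percent_change(self) -> float:
        """Relative change of the metric, in percent of the 'before' value."""
        return (self.after - self.before) / self.before * 100

    def render(self) -> str:
        return (
            f"Use case: {self.use_case}\n"
            f"What changed: {self.change_observed}\n"
            f"Metric: {self.metric_name} went from {self.before:g} "
            f"to {self.after:g} ({self.percent_change():+.0f}%)\n"
            f"Request: {self.request}"
        )


# Placeholder values for illustration only.
report = WorkflowFeedback(
    use_case="3-person capstone team, TypeScript backend, weekly deadlines",
    change_observed="cannot pin a specific model for refactoring sessions",
    metric_name="minutes to first working draft",
    before=20,
    after=30,
    request="restore a specific option for refactoring tasks",
)
print(report.render())
```

Measuring the metric yourself, even roughly, is what turns a complaint into something a product team can act on.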

Important Clarity: This Is Not a Full "Claude Ban" in Copilot

A lot of social posts are using words like "disabled" or "banned." The accurate framing is narrower: selected premium models are not available for manual student self-selection under the new student plan. GitHub also says Auto mode still includes models from Anthropic, OpenAI, and Google.

Language matters because students make planning decisions from headlines. If the headline overstates the change, people may panic-migrate when they do not need to. If the headline understates it, people get surprised on deadline day. The best approach is factual precision.

Final Verdict: What Student Developers Should Do Today

GitHub's student commitment is still alive, but access mechanics have clearly shifted. This update is less about removing student Copilot and more about controlling cost while preserving global reach. For students, that means one new reality: flexibility is now part of AI literacy.

Here is the practical bottom line:

- Keep using Copilot if it still supports your core coding flow.

- Build model-agnostic prompting habits now.

- Treat Auto mode as primary, not secondary.

- Keep a backup assistant path for critical deadlines.

- Send structured feedback with measurable workflow impact.

Student developers who adapt early will not just survive these platform shifts. They will outperform peers who rely on one fixed model setup. In 2026, the most valuable skill is no longer "knowing one AI tool." It is being able to build, debug, and ship reliably while AI tooling policies evolve in real time.

Copilot for students is still free, but it is entering a managed access era. The winners will be students who optimize process quality, not just model preference.